The moment my tiny soldier stopped responding to clicks, I felt a very specific kind of fear.

Not “bug in production” fear.

Not “API returning 500s” fear.

Worse.

Because I did not know what was broken - and I did not even have the vocabulary to describe it properly.

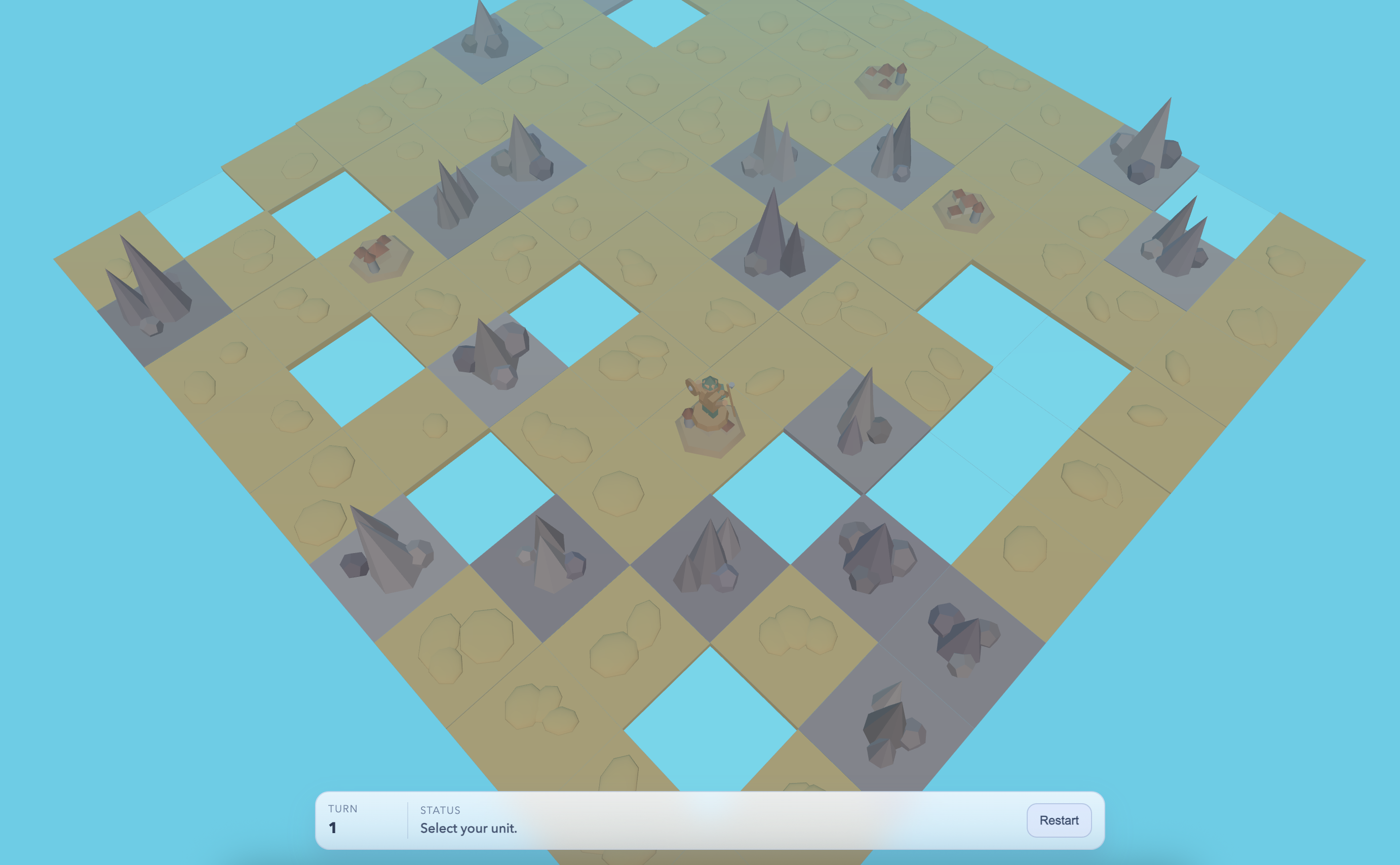

Today I vibe-coded a simple browser strategy game inspired by Polytopia: a stylized, turn-based island prototype with a random map, villages, terrain types (desert, ocean, mountains), one controllable unit, smooth movement, and a minimal HUD - all built in Three.js as an MVP foundation for future expansion.

And the real goal was not “make a game.”

It was the same experiment as before: push the loop, find the edges.

Where will AI fail?

Where will it surprise me?

How far can “idea -> code -> run -> feedback” go in a domain I do not understand?

New domain, different emotions

I have built plenty of backend services: APIs, databases, dashboards, infrastructure glue. Even when code is messy, I can still feel the shape of it. I know what “good direction” looks like.

Game dev was different.

I have not built a game since a university project… maybe 10 years ago. And it mattered more than I expected. The discomfort was not just “new libraries.”

It was deeper:

I did not understand the code at all.

Not “I do not fully understand this framework.”

More like: I could not tell if we were building a house or a haunted maze.

That is the first hidden cost of vibe-coding in a new domain: if you cannot evaluate what is being built, progress feels like walking forward in fog.

So I fell back to the only tactic that always works when I am lost.

Start small. Define the real MVP.

The most important decision was not choosing Three.js or grid size or pathfinding.

It was defining the smallest possible thing that would still answer my real question:

Can I build a web-based strategy game prototype that feels playable?

Not complete. Not balanced. Not pretty.

Playable.

That meant cutting aggressively:

- skip AI opponents (for now)

- skip HP

- skip combat

- move range = 1

- one unit, one action, one “End Turn”

- random map

- that is it

So the MVP became brutally minimal:

- random island map

- terrain types (desert, ocean, mountains)

- villages on passable land

- one controllable unit

- movement that feels smooth

- minimal HUD so you are not totally blind

Just enough to become a foundation for future features - if the foundation did not collapse.
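To show what "random island map" plus "villages on passable land" can mean in code, here is a minimal TypeScript sketch. It is not the code from this project: `generateIsland`, the `Tile` shape, the mulberry32-style seeded PRNG and every threshold are assumptions picked for illustration. A radial falloff plus a bit of noise keeps land in the middle, the border is forced to ocean so it reads as an island, and villages only land on desert tiles.

```typescript
// Hypothetical tile model: the post names the terrain types, not the data structure.
type Terrain = "ocean" | "desert" | "mountain";

interface Tile {
  x: number;
  y: number;
  terrain: Terrain;
  village: boolean;
}

// Small seeded PRNG (mulberry32-style): the same seed always reproduces the same map.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

function generateIsland(size: number, seed: number): Tile[][] {
  const rand = mulberry32(seed);
  const c = (size - 1) / 2;
  const maxDist = Math.hypot(c, c) || 1; // avoid dividing by zero on a 1x1 map
  const map: Tile[][] = [];
  for (let y = 0; y < size; y++) {
    const row: Tile[] = [];
    for (let x = 0; x < size; x++) {
      const border = x === 0 || y === 0 || x === size - 1 || y === size - 1;
      // Radial falloff plus a little noise: high near the centre, low near the edges.
      const h = 1 - Math.hypot(x - c, y - c) / maxDist + (rand() - 0.5) * 0.3;
      let terrain: Terrain = "ocean";
      if (!border && h > 0.75 && rand() < 0.35) terrain = "mountain";
      else if (!border && h > 0.35) terrain = "desert";
      row.push({ x, y, terrain, village: false });
    }
    map.push(row);
  }
  // Villages only on passable land (desert in this sketch).
  for (const row of map) {
    for (const tile of row) {
      if (tile.terrain === "desert" && rand() < 0.08) tile.village = true;
    }
  }
  return map;
}
```

Seeding matters more than it looks: a reproducible "random" map means a weird bug can be replayed on the exact same island.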

“Ask me questions” was the real design tool

Here is the thing I did not expect: coding was not the hard part.

Taste was.

I was not confident about what “good” looked like, so I kept flipping the workflow:

Instead of asking AI for solutions, I asked it to ask me questions.

Not implementation questions - constraint questions:

- square vs hex? (square)

- random map vs fixed? (random)

- diagonals? (yes)

- what blocks movement? (water + mountains)

- what does “good camera” feel like? (fixed angle, no rotation)

It did not feel like programming. It felt like translating instincts into rules.
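Those answers translate almost directly into rules. A minimal sketch, assuming a square grid stored as rows of terrain strings (`Terrain`, `canMove` and `validMoves` are hypothetical names, not the project's): "diagonals: yes" means Chebyshev distance, "move range = 1" means a distance of exactly 1, and water plus mountains form the blocking set.

```typescript
// Hypothetical encoding: the post only says water and mountains block movement.
type Terrain = "ocean" | "desert" | "mountain";

interface Coord {
  x: number;
  y: number;
}

const BLOCKING: ReadonlySet<Terrain> = new Set<Terrain>(["ocean", "mountain"]);
const MOVE_RANGE = 1;

// Chebyshev distance: with diagonals allowed, a diagonal step counts as one step.
function chebyshev(a: Coord, b: Coord): number {
  return Math.max(Math.abs(a.x - b.x), Math.abs(a.y - b.y));
}

function canMove(grid: Terrain[][], from: Coord, to: Coord): boolean {
  const row = grid[to.y];
  const terrain = row === undefined ? undefined : row[to.x];
  if (terrain === undefined) return false; // off the board
  const d = chebyshev(from, to);
  return d >= 1 && d <= MOVE_RANGE && !BLOCKING.has(terrain);
}

// Every tile the unit could move to this turn (the candidates to highlight).
function validMoves(grid: Terrain[][], from: Coord): Coord[] {
  const moves: Coord[] = [];
  for (let dy = -MOVE_RANGE; dy <= MOVE_RANGE; dy++) {
    for (let dx = -MOVE_RANGE; dx <= MOVE_RANGE; dx++) {
      const to = { x: from.x + dx, y: from.y + dy };
      if (canMove(grid, from, to)) moves.push(to);
    }
  }
  return moves;
}
```

Keeping the rule in one pure function means the highlight, the click handler and any future AI opponent can all ask the same question.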

And it made something click:

In games, gameplay can be tiny, but feel is everything.

The loop: localhost only

I kept everything local: generate -> run -> refresh.

No deploying. No “push to see if it works.” Just pure verification:

always build -> always run -> verify at http://127.0.0.1:4173/

That made iteration ridiculously fast.

It also revealed something embarrassing:

I forgot to commit regularly.

When I build services, committing is muscle memory. With this, nothing felt “production,” so I kept going without checkpoints.

Until something broke.

And suddenly I really wanted git.

Because when you do not understand the codebase, history becomes your lifeline - not just to revert, but to explain how you got here.

(Also: at one point I looked up and realized I had ~28 terminals open. Very professional.)

The camera saga: a lesson in “feel”

I expected map generation or movement logic to be the hard part.

Instead, the rabbit hole was camera movement:

- “angle feels weird” -> make it diagonal

- “pan feels off” -> rework drag so it feels like you are grabbing the board

- “map is shivering” -> rewrite panning anchored in world space

- zoom should zoom the map, not the page

None of that is complicated on paper.

But tiny input/camera differences completely change whether the game feels playable or annoying.
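The "map is shivering" fix is the most code-shaped item in that list. One common way to anchor panning in world space, sketched here in plain TypeScript under assumptions (a camera that never rotates, the board as the plane y = 0, and the caller supplying the world-space ray direction under the cursor, which in Three.js would typically come from a `Raycaster`): on pointer down, remember the board point under the cursor; on every move, shift the camera so that point is back under the cursor. Zoom moves the camera along the cursor ray so the point under the cursor stays put. `PanController` and `hitGround` are hypothetical names. Keeping the wheel from zooming the page is a separate DOM detail (a `wheel` listener registered with `{ passive: false }` that calls `preventDefault()`) and is left out.

```typescript
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

// Where a ray from `origin` along `dir` meets the board plane y = 0 (null if it never does).
// Assumes the camera is above the board.
function hitGround(origin: Vec3, dir: Vec3): Vec3 | null {
  if (dir.y >= 0) return null; // looking up or parallel to the board
  const t = -origin.y / dir.y;
  return { x: origin.x + t * dir.x, y: 0, z: origin.z + t * dir.z };
}

// Fixed-angle camera: only the position changes, the orientation never rotates.
// `dir` is the world-space ray direction under the cursor, supplied by the caller.
class PanController {
  position: Vec3;
  private anchor: Vec3 | null = null;

  constructor(position: Vec3) {
    this.position = { ...position };
  }

  // Pointer down: remember which board point was grabbed.
  begin(dir: Vec3): void {
    this.anchor = hitGround(this.position, dir);
  }

  // Pointer move: slide the camera so the grabbed point is back under the cursor.
  drag(dir: Vec3): void {
    const anchor = this.anchor;
    if (anchor === null) return;
    const hit = hitGround(this.position, dir);
    if (hit === null) return;
    // Moving the camera parallel to the board shifts every ground hit by the same
    // offset, so translating by (anchor - hit) puts the anchor under the cursor again.
    this.position.x += anchor.x - hit.x;
    this.position.z += anchor.z - hit.z;
  }

  end(): void {
    this.anchor = null;
  }

  // Wheel: move the camera along the cursor ray so the board point under the
  // cursor stays fixed. factor < 1 zooms in, factor > 1 zooms out.
  zoomAt(dir: Vec3, factor: number): void {
    const hit = hitGround(this.position, dir);
    if (hit === null) return;
    this.position = {
      x: hit.x + (this.position.x - hit.x) * factor,
      y: hit.y + (this.position.y - hit.y) * factor,
      z: hit.z + (this.position.z - hit.z) * factor,
    };
  }
}
```

The design choice is the anchor: computing the pan from the original grab point, instead of accumulating small pixel deltas each frame, avoids the drift that shows up as jitter.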

Backend brain wants correctness.

Game brain wants vibe.

And vibe is not “polish.” Vibe is the product.

UI was simple… and still not simple

Even with a minimal HUD, I iterated more than expected:

- HUD in corner -> annoying

- HUD bottom center -> better

- “End Turn” button in the world near the unit -> much better

- only show it after you used your action -> perfect

That is very “game”: a button can exist, but if it breaks flow, it is wrong.
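The last rule in that list is small enough to write down. A hypothetical sketch of the turn state (`TurnState`, `useAction` and `showEndTurnButton` are illustrative names, not the project's): one action per turn, and the in-world "End Turn" button is visible only once that action is spent.

```typescript
// Hypothetical turn state for the one-unit MVP.
interface TurnState {
  turn: number;
  actionUsed: boolean;
}

function newGame(): TurnState {
  return { turn: 1, actionUsed: false };
}

// Moving the unit spends the single action for this turn; a second attempt is ignored.
function useAction(state: TurnState): TurnState {
  return state.actionUsed ? state : { ...state, actionUsed: true };
}

// The in-world "End Turn" button only appears once the action has been spent.
function showEndTurnButton(state: TurnState): boolean {
  return state.actionUsed;
}

function endTurn(state: TurnState): TurnState {
  return { turn: state.turn + 1, actionUsed: false };
}
```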

The terrain glow-up (aka: the taste loop)

I started flat. Then went “okay, make mountains 3D.”

First result was… ugly. Uniform. Toy-like.

So feedback became pure taste:

- make mountains chaotic and varied

- do not elevate the whole tile, only peaks

- add subtle hills to land, keep village tiles flat

- tighten tile spacing, keep simple lines

- switch to pastel palette

- match the ocean background so it reads like an island

- “make it ocean blue like Mauritius”

- and then: “actually make it desert, not grass”
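Several of those notes are really rules about elevation. One way to encode "only peaks, not the whole tile", "chaotic and varied" and "keep village tiles flat", sketched with hypothetical names and made-up ranges: a deterministic per-tile hash picks 2 to 4 peaks with random offsets and heights for each mountain tile, plain land gets at most a subtle lift, and villages and ocean stay at zero. The hash constants are arbitrary mixing constants, not taken from the project.

```typescript
// Hypothetical types; the post describes the look, not the data model.
type Terrain = "ocean" | "desert" | "mountain";

interface Peak {
  dx: number; // offset from the tile centre, in tile units
  dz: number;
  height: number;
  radius: number;
}

// Deterministic integer hash -> [0, 1). Same tile + salt always gives the same value,
// so the "random" mountains do not change between frames or reloads.
function hash01(x: number, z: number, salt: number): number {
  let h = Math.imul(x, 374761393) ^ Math.imul(z, 668265263) ^ Math.imul(salt + 1, 2246822519);
  h = Math.imul(h ^ (h >>> 13), 1274126177);
  return ((h ^ (h >>> 16)) >>> 0) / 4294967296;
}

// Mountains: the tile itself stays flat; 2 to 4 peaks of varied size sit on top of it.
function mountainPeaks(x: number, z: number): Peak[] {
  const count = 2 + Math.floor(hash01(x, z, 0) * 3);
  const peaks: Peak[] = [];
  for (let i = 0; i < count; i++) {
    const s = 4 * i + 1; // separate salts per peak and per property
    peaks.push({
      dx: (hash01(x, z, s) - 0.5) * 0.5,
      dz: (hash01(x, z, s + 1) - 0.5) * 0.5,
      height: 0.4 + hash01(x, z, s + 2) * 0.8,
      radius: 0.15 + hash01(x, z, s + 3) * 0.15,
    });
  }
  return peaks;
}

// Lift of the tile surface itself: villages, ocean and mountain bases stay flat,
// plain land gets at most a subtle hill.
function surfaceLift(terrain: Terrain, village: boolean, x: number, z: number): number {
  if (village || terrain !== "desert") return 0;
  return hash01(x, z, 99) * 0.05;
}
```

Writing taste down as numbers like this makes the feedback loop concrete: "more chaotic" becomes a wider height range, "flatter" becomes a smaller lift.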

AI can generate shapes fast.

But it needs you to steer style hard.

That is the shift: when the loop is fast enough, your imagination stops being the limiter.

Your taste and focus become the limiter instead.

Then the controls broke, and I panicked

At some point, unit movement stopped behaving.

Clicking a tile did not move the unit to the tile I clicked. It felt random. Haunted. Wrong.

In backend land, bugs have handles:

- logs

- requests

- stack traces

- metrics

Here the bug was just… behavior.

And the behavior was wrong.

What made it stressful was not just the bug - it was that I did not know how to describe it in game terms. I did not know if the issue lived in:

event handling, raycasting, camera math, animation timing, state, pathfinding, or some invisible scene graph detail.

That is when AI stopped feeling like “a team in a box.”

It felt like I was the junior dev, and the senior dev was waiting for me to file a proper bug report.

So I changed tactics.

The fix: stop guessing, make input deterministic

The bug turned out to be a mental-model failure.

I was raycasting whatever meshes happened to be in the scene: tiles, highlights, lines - basically “click what is under the mouse.”

Which is fine… until it is not.

The fix was surprisingly clean (and honestly educational):

Instead of raycasting arbitrary scene meshes, the click was resolved by raycasting a simple board plane, then mapping the hit point to tile coordinates deterministically.

Once that changed, input became stable again.
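Roughly what that fix looks like, as a dependency-free TypeScript sketch rather than the project's actual code. In Three.js the ray would usually come from `Raycaster.setFromCamera`, and `raycaster.ray.intersectPlane(plane, target)` can do the plane hit; here the intersection is written out by hand. The board layout (tile centres at `col * TILE_SIZE` and `row * TILE_SIZE` on the plane y = 0, a 10 by 10 board) and the names `pickTile` and `worldToTile` are assumptions.

```typescript
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

interface TileCoord {
  col: number;
  row: number;
}

// Hypothetical board layout: tile (col, row) is centred at (col * TILE_SIZE, 0, row * TILE_SIZE).
const TILE_SIZE = 1;
const BOARD_COLS = 10;
const BOARD_ROWS = 10;
const BOARD_Y = 0; // height of the invisible pick plane

// Ray vs. the horizontal plane y = BOARD_Y. Null when the ray is parallel to the
// plane or the plane is behind the ray origin.
function intersectBoardPlane(origin: Vec3, dir: Vec3): Vec3 | null {
  if (Math.abs(dir.y) < 1e-9) return null;
  const t = (BOARD_Y - origin.y) / dir.y;
  if (t < 0) return null;
  return { x: origin.x + t * dir.x, y: BOARD_Y, z: origin.z + t * dir.z };
}

// Same hit point in, same tile out: no dependence on which meshes
// (peaks, highlights, grid lines) happen to be in the scene.
function worldToTile(p: Vec3): TileCoord | null {
  const col = Math.round(p.x / TILE_SIZE);
  const row = Math.round(p.z / TILE_SIZE);
  if (col < 0 || row < 0 || col >= BOARD_COLS || row >= BOARD_ROWS) return null;
  return { col, row };
}

function pickTile(origin: Vec3, dir: Vec3): TileCoord | null {
  const hit = intersectBoardPlane(origin, dir);
  return hit === null ? null : worldToTile(hit);
}
```

The important property is that the answer is pure arithmetic on the ray, so adding a new highlight mesh or a taller mountain can no longer change which tile a click lands on.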

That moment was the entire day in one lesson:

In a new domain, you do not need to know everything.

You need one mental model you can trust.

Git is hard for humans. For AI it is… a database.

When I finally started committing at meaningful milestones, another idea snapped into focus.

For humans, git history is something we fear touching:

- “do not rewrite history”

- “please no conflicts”

- “rebase without dying”

- “how do I find the commit that changed X?”

For AI, git is not a scary ritual.

Git is structured data.

A tree. A timeline. A dataset.

AI can:

- jump to any point in history without emotional cost

- temporarily inspect the repo at commit N

- compare branches like it is diffing JSON

- read commit messages and reconstruct “what changed and why”

- build a narrative of effort: movement vs map gen vs HUD

It made me see commits differently:

They are not just collaboration hygiene.

They are project memory.

And with AI in the loop, that memory becomes even more valuable.

“Commit often” is not just a rule. It is future debugging insurance - for humans and machines.

I tried something new: dump the thread into the repo

At the end, I asked Codex to document everything in the repository - and dump the thread memory into markdown files inside the repo.

The goal was continuity:

If another AI (or another thread, or future-me) picks this up later, onboarding should be fast:

- what this project is

- what decisions were made

- what exists and why

- what the next steps could be

Because right now, the biggest tax is context loss:

- switching threads

- switching tools

- switching time

Dumping memory into the repo turns that context into an artifact.

A portable brain.

What I learned today

- New domains change the emotional texture. Even with AI building, you still feel lost if you cannot evaluate the shape of what is being built.

- The MVP definition matters more when you are exploring unknowns. “Build a game” is infinite. “Build a playable island prototype with one unit” is a question you can actually answer.

- Feel is a real requirement. Camera and input are not polish. They are the product.

- Commit discipline is not optional when you do not understand the code. Checkpoints are confidence.

- Git history becomes more valuable with AI. For humans it is a safety net. For AI it is a navigation system.

- Project memory belongs inside the project. If you want continuity across threads/tools/time, store context where the work lives.

The real point

The coolest part was not that I made a browser game.

It was that I could enter a completely unfamiliar domain and still keep moving - not because I suddenly became a game developer, but because the loop stayed intact:

small goal -> build -> test -> observe -> adjust

And when the loop breaks (like the control bug), the answer is not “be smarter.”

The answer is: tighten the feedback, tighten the checkpoints, and keep the experiment honest.

Tomorrow I will probably go back to things I understand.

But today was proof that the loop can carry you into weird territory…

as long as you keep the MVP small enough to survive it.